As AI research and technology development continue to advance, there is also a need to account for the energy and infrastructure resources required to manage large datasets and execute difficult computations. When we look to nature for models of efficiency, the human brain stands out, resourcefully handling complex tasks. Inspired by this, researchers at Microsoft are seeking to understand the brain’s efficient processes and replicate them in AI.

At Microsoft Research Asia, in collaboration with Fudan University, Shanghai Jiao Tong University, and the Okinawa Institute of Technology, three notable projects are underway. One introduces a neural network that simulates the way the brain learns and computes information; another enhances the accuracy and efficiency of predictive models for future events; and a third improves AI’s proficiency in language processing and pattern prediction. These projects, highlighted in this blog post, aim not only to boost performance but also to significantly reduce power consumption, paving the way for more sustainable AI technologies.

CircuitNet simulates brain-like neural patterns

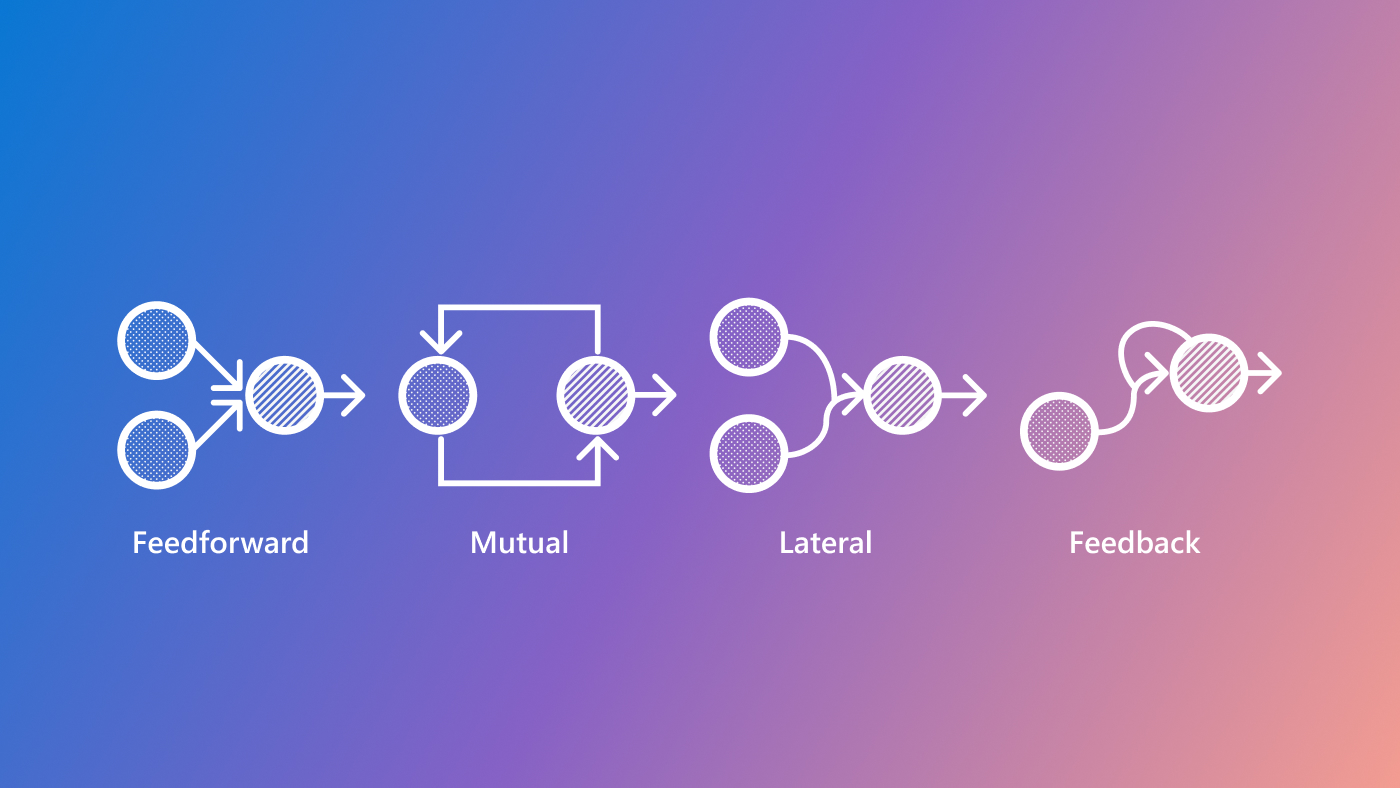

Many AI applications rely on artificial neural networks, designed to mimic the brain’s complex neural patterns. These networks typically replicate only one or two types of connectivity patterns. In contrast, the brain propagates information using a variety of neural connection patterns, including feedforward excitation and inhibition, mutual inhibition, lateral inhibition, and feedback inhibition (Figure 1). The brain’s networks contain densely interconnected local areas with fewer connections between distant regions. Each neuron forms thousands of synapses to carry out specific tasks within its region, while some synapses link different functional clusters, which are groups of interconnected neurons that work together to perform specific functions.

Inspired by this biological architecture, researchers have developed CircuitNet, a neural network that replicates multiple types of connectivity patterns. CircuitNet’s design features a combination of densely connected local nodes and fewer connections between distant regions, enabling enhanced signal transmission through circuit motif units (CMUs)—small, recurring patterns of connections that help to process information. This structure, shown in Figure 2, supports multiple rounds of signal processing, potentially advancing how AI systems handle complex information.
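To make the "dense locally, sparse globally" idea concrete, here is a minimal sketch of a connectivity mask with that shape. It is not CircuitNet's actual architecture: the function name `circuit_style_mask`, the notion of "port" nodes that carry signals between units, and all sizes are assumptions made for illustration only.

```python
def circuit_style_mask(num_units, nodes_per_unit, ports_per_unit=1):
    """Build a 0/1 connectivity mask: dense inside each unit, sparse between units.

    Hypothetical illustration only. Nodes are grouped into units; every pair of
    nodes in the same unit is connected, while different units are linked only
    through their first `ports_per_unit` nodes (the assumed "port" nodes).
    """
    n = num_units * nodes_per_unit
    mask = [[0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            if i == j:
                continue  # no self-connections in this sketch
            same_unit = i // nodes_per_unit == j // nodes_per_unit
            both_ports = (i % nodes_per_unit < ports_per_unit
                          and j % nodes_per_unit < ports_per_unit)
            if same_unit or both_ports:
                mask[i][j] = 1
    return mask


mask = circuit_style_mask(num_units=3, nodes_per_unit=4)
used = sum(sum(row) for row in mask)
total = len(mask) * (len(mask) - 1)
print(f"{used} of {total} possible directed connections are active")
```

In this toy layout, most of the active connections sit inside the units, and only a handful cross between them, which is the property the paragraph above describes.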

Evaluation results are promising. CircuitNet outperformed several popular neural network architectures in function approximation, reinforcement learning, image classification, and time-series prediction. It also achieved comparable or better performance than other neural networks, often with fewer parameters, demonstrating its effectiveness and strong generalization capabilities across various machine learning tasks. Our next step is to test CircuitNet’s performance on large-scale models with billions of parameters.

Spiking neural networks: A new framework for time-series prediction

Spiking neural networks (SNNs) are emerging as a powerful type of artificial neural network, noted for their energy efficiency and potential application in fields like robotics, edge computing, and real-time processing. Unlike traditional neural networks, which process signals continuously, SNNs activate a neuron only when its accumulated input reaches a specific threshold, at which point the neuron generates a spike. This approach simulates the way the brain processes information and conserves energy. However, SNNs have not been strong at predicting future events based on historical data, a key function in sectors like transportation and energy.
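The threshold behavior can be illustrated with a leaky integrate-and-fire neuron, a common textbook spiking model. This is a minimal sketch rather than the neuron model used in the work; the function name `lif_spikes` and the `threshold` and `leak` values are assumptions chosen for the example.

```python
def lif_spikes(inputs, threshold=1.0, leak=0.9):
    """Simulate one leaky integrate-and-fire neuron over a list of input currents.

    Illustrative parameters only. Returns a list of 0/1 values, one per time step.
    """
    potential = 0.0
    spikes = []
    for current in inputs:
        # Decay the stored potential, then add the new input.
        potential = leak * potential + current
        if potential >= threshold:
            spikes.append(1)   # threshold reached: emit a spike
            potential = 0.0    # reset after spiking
        else:
            spikes.append(0)   # below threshold: stay silent
    return spikes


print(lif_spikes([0.5, 0.5, 0.5, 0.0, 1.2]))
```

The neuron stays silent while small inputs accumulate and emits a spike only at the steps where the potential crosses the threshold, so most time steps produce no activity.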

To improve SNNs’ predictive capabilities, researchers have proposed an SNN framework designed to predict trends over time, such as electricity consumption or traffic patterns. This approach uses the efficiency of spiking neurons in processing temporal information and synchronizes time-series data, which is collected at regular intervals, with SNNs. Two encoding layers transform the time-series data into spike sequences, allowing the SNNs to process them and make accurate predictions, as shown in Figure 3.
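As a rough illustration of turning a time series into spike sequences, the sketch below uses a simple delta-style encoding: one channel spikes when the value rises by at least a threshold, and another spikes when it falls by at least that amount. This is one common encoding idea, not necessarily what the two encoding layers in the framework do; `delta_encode` and its `threshold` are hypothetical names and values.

```python
def delta_encode(series, threshold=0.5):
    """Encode a time series as two spike trains based on step-to-step changes.

    Hypothetical sketch. Returns (up, down), each a 0/1 list as long as `series`.
    The first step has no previous value, so neither channel spikes there.
    """
    if not series:
        return [], []
    up, down = [0], [0]
    for prev, cur in zip(series, series[1:]):
        diff = cur - prev
        up.append(1 if diff >= threshold else 0)     # large increase
        down.append(1 if diff <= -threshold else 0)  # large decrease
    return up, down


readings = [1.0, 2.0, 2.1, 1.0, 1.0]  # made-up values for illustration
up, down = delta_encode(readings)
print("up:  ", up)
print("down:", down)
```

Small fluctuations produce no spikes at all, which hints at why spike-based processing can be economical for slowly changing signals.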

Tests show that this SNN approach is very effective for time-series prediction, often matching or outperforming traditional methods while significantly reducing energy consumption. SNNs successfully capture temporal dependencies and model time-series dynamics, offering an energy-efficient approach that closely aligns with how the brain processes information. We plan to continue exploring ways to further improve SNNs based on the way the brain processes information.

Refining SNN sequence prediction

While SNNs can help models predict future events, research has shown that their reliance on spike-based communication makes it challenging to directly apply many techniques from artificial neural networks. For example, SNNs struggle to effectively process rhythmic and periodic patterns found in natural language processing and time-series analysis. In response, researchers developed a new approach for SNNs called CPG-PE, which combines two techniques:

- Central pattern generators (CPGs): Neural networks in the brainstem and spinal cord that autonomously generate rhythmic patterns, controlling functions such as moving, breathing, and chewing

- Positional encoding (PE): A process that helps artificial neural networks discern the order and relative positions of elements within a sequence

By integrating these two techniques, CPG-PE helps SNNs discern the position and timing of signals, improving their ability to process time-based information. This process is shown in Figure 4.
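One way to picture the combination of rhythmic generators and positional encoding is a bank of oscillators with different periods, each thresholded into a binary spike at every time step. The sketch below follows that idea only loosely and is not the CPG-PE formulation itself: the function name `cpg_positional_spikes`, the power-of-two periods, and the threshold are all assumptions for illustration.

```python
import math


def cpg_positional_spikes(num_steps, num_neurons=4, threshold=0.5):
    """Produce a binary, rhythmic position code for each time step.

    Hypothetical sketch. Neuron k oscillates with period 2 ** (k + 1) and
    spikes whenever its cosine wave is above `threshold`.
    Returns a list of `num_steps` rows, each a list of `num_neurons` 0/1 values.
    """
    codes = []
    for t in range(num_steps):
        row = []
        for k in range(num_neurons):
            period = 2 ** (k + 1)
            phase = 2 * math.pi * t / period
            row.append(1 if math.cos(phase) > threshold else 0)
        codes.append(row)
    return codes


for t, row in enumerate(cpg_positional_spikes(num_steps=8)):
    print(t, row)
```

Because the output is already made of spikes, a code like this can be supplied to a spiking network alongside its input without converting back to continuous values. How the two are actually combined in CPG-PE is not covered by this sketch.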

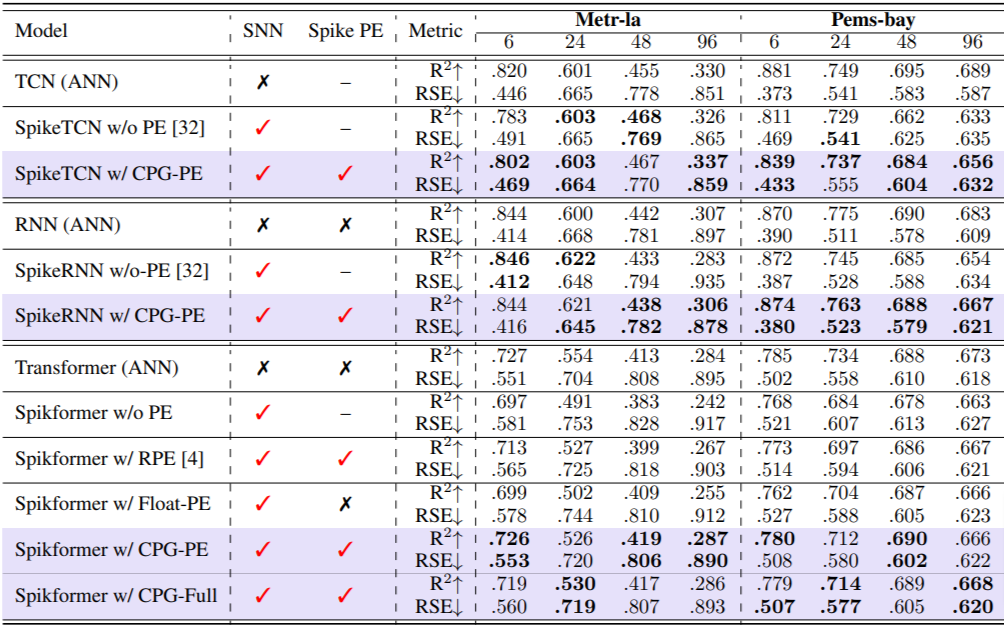

We evaluated CPG-PE using four real-world datasets: two covering traffic patterns, and one each for electricity consumption and solar energy. Results demonstrate that SNNs using this method significantly outperform those without positional encoding, as shown in Table 1. Moreover, CPG-PE can be easily integrated into any SNN designed for sequence processing, making it adaptable to a wide range of neuromorphic chips and SNN hardware.

Ongoing AI research for greater capability, efficiency, and sustainability

The innovations highlighted in this blog demonstrate the potential to create AI that is not only more capable but also more efficient. Looking ahead, we’re excited to deepen our collaborations and keep applying insights from neuroscience to AI research as part of our commitment to developing more sustainable technology.