The situated interaction research effort aims to enable computers to reason more deeply about their surroundings and to engage in fluid interaction with humans in physically situated settings. When people interact with each other, they engage in a rich, highly coordinated, mixed-initiative process, regulated through both verbal and non-verbal channels. In contrast, although their perceptual abilities are improving, computers are still unaware of their physical surroundings and of the “physics” of human interaction. Current human-computer interaction models assume a single user who gives full attention to a single computer system. Computers do not yet understand engagement, attention, proximity, interruptibility, turn-taking, group dynamics, social expectations, human memory and goals, and so on. Our research aims to address these challenges and create the basis for a new generation of situated systems that are capable of fluid interactions and collaborations with people.

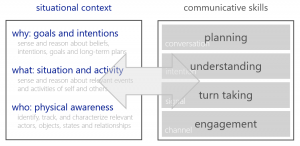

A primary research area for our group is situated language interaction. Historically, much of the focus in the dialog community has been on speech recognition and dialog control. In physically situated settings, robots and virtual agents require several additional competencies. Interactions in the open world typically involve multiple participants: people come and go, and they interleave their interactions with each other and with the system. Situated systems therefore need to regulate attention and conversational engagement. Similarly, simple turn-taking models like “you speak, then I speak” need to evolve into more sophisticated multi-party turn-taking models. At the higher levels, the actions of others (whether verbal, non-verbal, or physical) need to be understood within the broader situational context, and the systems need to continuously coordinate their own actions with those of all the other participants.

In our work, we develop computational models for situated communicative processes anchored in reasoning about the surrounding context. The work ranges from developing representations (for example, what are the key variables for reasoning about turn-taking in a multi-party conversation?) to constructing inference models (for example, who will start talking next?) to decision making (for example, should I start talking now?) and all the way to execution (for example, how should I say this, given what everyone else is doing?).
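To make the progression from representation to inference to decision making concrete, here is a toy Python sketch. It is not the group's models: the `Participant` fields, the scoring weights, and the silence threshold are all hypothetical, chosen only to show how the three layers could fit together for multi-party turn-taking.

```python
from dataclasses import dataclass


@dataclass
class Participant:
    """Representation: a few hypothetical variables for reasoning about turn-taking."""
    name: str
    is_speaking: bool        # currently holds the conversational floor
    gazing_at_system: bool   # visual attention is directed at the system
    seconds_silent: float    # time since this participant last spoke


def next_speaker_scores(participants):
    """Inference (toy heuristic): a normalized score for who talks next.

    The weights are made up for illustration, not learned from data.
    """
    raw = {}
    for p in participants:
        score = 1.0
        if p.is_speaking:
            score += 2.0     # assumption: the current speaker tends to keep the floor
        if p.gazing_at_system:
            score += 1.0     # assumption: gaze at the system signals engagement
        raw[p.name] = score
    total = sum(raw.values())
    return {name: score / total for name, score in raw.items()}


def should_system_speak(participants, silence_threshold=0.7):
    """Decision (toy rule): take the turn only when the floor is free,
    everyone has been silent long enough, and someone is attending to the system."""
    floor_is_free = all(not p.is_speaking for p in participants)
    silent_long_enough = all(
        p.seconds_silent >= silence_threshold for p in participants
    )
    addressed = any(p.gazing_at_system for p in participants)
    return floor_is_free and silent_long_enough and addressed


people = [
    Participant("A", is_speaking=False, gazing_at_system=True, seconds_silent=1.2),
    Participant("B", is_speaking=False, gazing_at_system=False, seconds_silent=3.0),
]
print(next_speaker_scores(people))
print(should_system_speak(people))
```

A real situated system would replace the hand-written rules with models driven by perception, and would add an execution layer that decides how to deliver the utterance given what everyone else is doing.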


Leah Perlmutter

Zhou Yu

Sean Andrist

Shray Bansal

Oriol Vinyals

Stephanie Rosenthal

Richard Roberts

William Wang

Walter Lasecki

People

Research Team

Sean Andrist

Principal Researcher

Dan Bohus

Senior Principal Researcher

Eric Horvitz

Chief Scientific Officer

Nick Saw

Principal Research Software Engineer

Andy Wilson

Partner Research Manager

Alumni

Anne Loomis Thompson

Principal Research Software Engineer