Recent advances in machine learning and signal processing, as well as the availability of massive computing power, have resulted in dramatic and steady improvement in speech recognition accuracy. Voice interfaces to digital devices have become more and more common. Lectures and online conversations can be transcribed using the live caption and translation features of PowerPoint, Microsoft Teams, and Skype. The speech technology community, including those of us at Microsoft, continues to innovate, pushing the envelope and expanding the application areas of the technology.

One of our long-term efforts aims to transcribe natural conversations (that is, to recognize “who said what”) from far-field recordings. Earlier this year, we announced Conversation Transcription, a new capability of Speech Services that is part of the Microsoft Azure Cognitive Services family. This feature is currently in private preview. Achieving reasonable speech recognition and speaker attribution accuracy in a wide range of far-field settings often requires microphone arrays.

As researchers in the Microsoft Speech and Dialog Research Group, we’re looking to make the benefits of transcription, such as closed captioning for colleagues who are deaf or hard of hearing, more broadly accessible. We will be presenting our paper, “Meeting Transcription Using Asynchronous Distant Microphones,” at Interspeech 2019. The paper provides the foundation for the demo we gave at the Microsoft Build 2019 developers conference earlier this year. The research team working on this project includes Takuya Yoshioka, Dimitrios Dimitriadis, Andreas Stolcke, William Hinthorn, Zhuo Chen, Michael Zeng, and Xuedong Huang. Our paper shows the potential for meeting participants to use multiple, readily available devices that are already equipped with microphones, instead of specially designed microphone arrays.

Using technology from our pockets and bags for accurate transcription

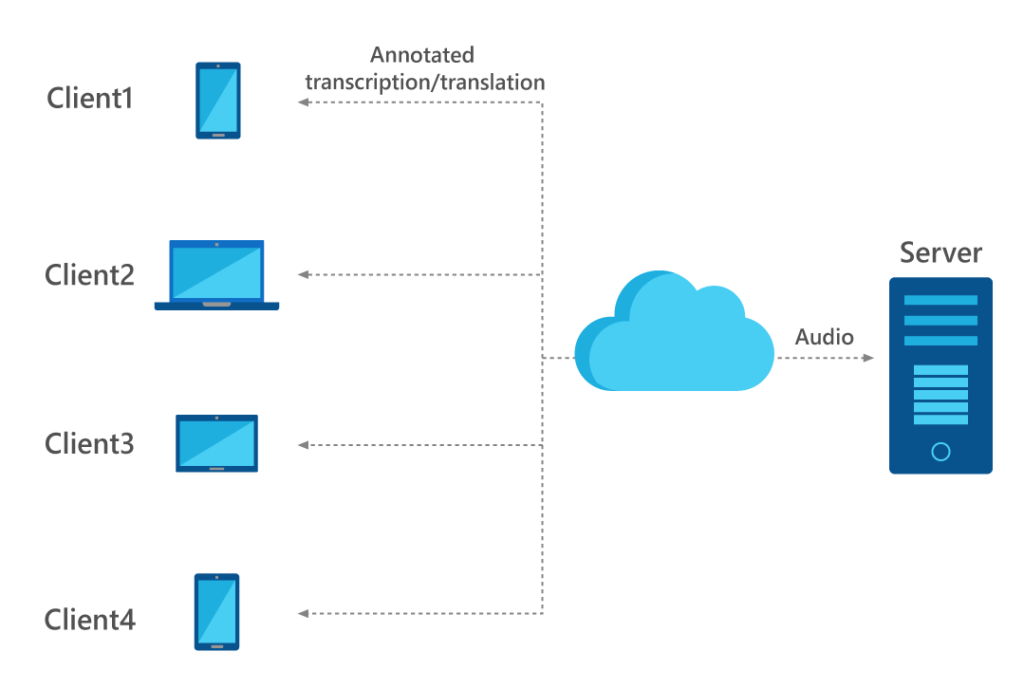

The central idea behind our approach is to leverage any internet-connected devices, such as the laptops and smartphones that attendees typically bring to meetings, and virtually form an ad hoc microphone array in the cloud. Teams could then choose to use the phones, laptops, and tablets they already have with them to enable high-accuracy transcription, with no need for special-purpose hardware.

While the idea sounds simple, it requires overcoming many technical challenges to be effective. The audio quality of devices varies significantly. The speech signals captured by different microphones are not aligned with each other. The number of devices and their relative positions are unknown. For these reasons and others, consolidating the information streams from multiple independent devices in a coherent way is much more complicated than it may seem. In fact, although the concept of ad hoc microphone arrays dates back to the beginning of this century, to our knowledge it has not been realized as a product or public prototype so far. Meanwhile, techniques for combining multiple information streams were developed in different research areas. At the same time, general advances in speech recognition, especially via the use of neural network models, have helped bring transcription accuracy closer to usable levels.
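To make the alignment challenge concrete, consider two devices that start recording at different moments, so the same utterance sits at a different sample offset in each stream. The sketch below is a minimal illustration and not necessarily how the system in our paper does it: it assumes a single constant offset (no clock drift, which real devices do exhibit) and estimates that offset by searching for the lag that maximizes the cross-correlation. The function names `estimate_lag` and `align`, the `max_lag` parameter, and the synthetic signals are all hypothetical.

```python
import random


def estimate_lag(reference, other, max_lag):
    """Return the lag k (in samples) that maximizes sum(reference[n] * other[n + k])."""
    best_lag, best_score = 0, float("-inf")
    for lag in range(-max_lag, max_lag + 1):
        score = 0.0
        for n, ref_sample in enumerate(reference):
            m = n + lag
            if 0 <= m < len(other):
                score += ref_sample * other[m]
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag


def align(reference, other, max_lag):
    """Shift `other` so that it lines up with `reference`, then trim to equal length."""
    lag = estimate_lag(reference, other, max_lag)
    if lag >= 0:
        shifted = other[lag:]              # drop the leading samples of `other`
    else:
        shifted = [0.0] * (-lag) + other   # pad `other` with leading silence
    n = min(len(reference), len(shifted))
    return reference[:n], shifted[:n], lag


# Synthetic example (assumption): device B hears the same source 37 samples
# later than device A and adds a little noise of its own.
rng = random.Random(0)
source = [rng.gauss(0.0, 1.0) for _ in range(400)]
device_a = source
device_b = [0.0] * 37 + [s + 0.1 * rng.gauss(0.0, 1.0) for s in source]

ref, shifted, lag = align(device_a, device_b, max_lag=50)
print("estimated lag in samples:", lag)
```

A brute-force search like this is only practical for short signals and small offsets. It is meant to show the idea, and a real system would also need to track offsets that change over time.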

Harnessing the power of ad hoc microphone arrays: From blind beamforming to system combination

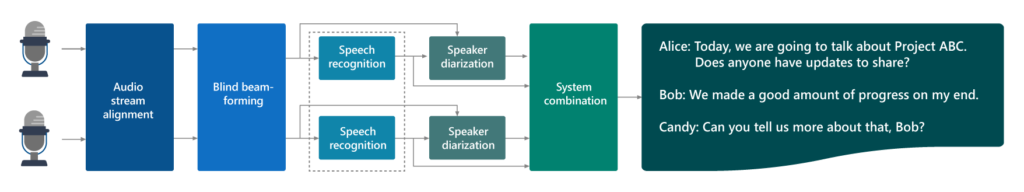

As described in our paper, we built an end-to-end system that puts all the relevant components together, both to evaluate the feasibility of transcribing meetings with ad hoc microphone arrays and to optimize how those components are combined.

The diagram shown above depicts the resulting processing pipeline. It starts with aligning signals from different microphones, followed by blind beamforming. The term “blind” refers to the fact that beamforming is performed without any knowledge about the microphones and their locations. This is made possible by neural networks optimized to recover input features for acoustic models, as we reported previously. The beamformer generates multiple signals so that the downstream modules (speech recognition and speaker diarization) can still leverage the acoustic diversity offered by the random microphone placement. After speech recognition and speaker diarization, the speaker-annotated transcripts from the multiple streams are consolidated by combining confusion networks that encode both word and speaker hypotheses. The consolidated transcripts are then sent back to the meeting attendees. After the meeting, the attendees can choose to keep the transcripts available only to themselves or share them with specified people.
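The final consolidation step can be illustrated with a heavily simplified sketch. A confusion network is a sequence of slots, each holding competing hypotheses with posterior probabilities. Here each hypothesis is a (word, speaker) pair, each stream's slots are assumed to be already aligned with the other streams' slots (the alignment itself is the hard part and is not shown), and the streams are merged by a weighted average of posteriors per slot. The function name `combine_slots`, the data layout, and the example posteriors are hypothetical, not taken from the paper.

```python
from collections import defaultdict


def combine_slots(streams, weights=None):
    """Merge pre-aligned confusion-network slots from several streams.

    streams: list of streams; each stream is a list of slots; each slot maps
             a (word, speaker) hypothesis to its posterior probability.
    weights: optional per-stream weights; defaults to a uniform average.
    Returns the highest-scoring (word, speaker) pair for every slot.
    """
    if weights is None:
        weights = [1.0 / len(streams)] * len(streams)
    combined = []
    for slot_index in range(len(streams[0])):
        scores = defaultdict(float)
        for stream, weight in zip(streams, weights):
            for hypothesis, posterior in stream[slot_index].items():
                scores[hypothesis] += weight * posterior
        best_hypothesis, _ = max(scores.items(), key=lambda item: item[1])
        combined.append(best_hypothesis)
    return combined


# Three hypothetical streams, two slots each. Stream B mishears the first word
# and stream C mishears the second, but the combination corrects both.
stream_a = [{("hello", "spk1"): 0.6, ("yellow", "spk1"): 0.4},
            {("world", "spk1"): 0.9, ("word", "spk1"): 0.1}]
stream_b = [{("yellow", "spk1"): 0.55, ("hello", "spk1"): 0.45},
            {("world", "spk1"): 0.7, ("word", "spk2"): 0.3}]
stream_c = [{("hello", "spk1"): 0.8, ("hello", "spk2"): 0.2},
            {("word", "spk1"): 0.6, ("world", "spk1"): 0.4}]

result = combine_slots([stream_a, stream_b, stream_c])
print(result)
```

Scoring word and speaker jointly means that a stream with a confident speaker label can fix another stream's attribution error in the same way it fixes a word error.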

Our system outperforms a single-device system by 14.8% and 22.4% with three and seven microphones, respectively. A version of the system runs in real time as demonstrated here. Experimental results on publicly available NIST meeting test data are also reported in an extended version of the paper, which we published online.

The work published at Interspeech 2019 is part of a longer focused effort, codenamed Project Denmark. Many interesting challenges remain to be investigated, such as the separation of overlapping speech, the end-to-end modeling and training of the entire system, and supporting accurate speaker attribution for people who want to be recognized in transcriptions while ensuring that others can freely choose to remain anonymous. More results will be coming, and we encourage you to visit the project page, check out related publications, and stay tuned for further developments with this technology. For those attending Interspeech 2019, we are looking forward to discussions with our colleagues in the research community.

We will be presenting our research at Interspeech 2019 at 10:00am on Tuesday, September 17, during the “Rich Transcription and ASR Systems” session.