Neural Text-to-Speech—along with recent milestones in computer vision and question answering—is part of a larger Azure AI mission to provide relevant, meaningful AI solutions and services that work better for people because they better capture how people learn and work—with improved vision, knowledge understanding, and speech capabilities. At the center of these efforts is XYZ-code, a joint representation of three cognitive attributes: monolingual text (X), audio or visual sensory signals (Y), and multilingual (Z). For more information about these efforts, read the XYZ-code blog post.

Neural Text-to-Speech (opens in new tab) (Neural TTS), a powerful speech synthesis capability of Azure Cognitive Services (opens in new tab), enables developers to convert text to lifelike speech. It is used in voice assistant scenarios, content read aloud capabilities, accessibility tools, and more. Neural TTS has now reached a significant milestone in Azure, with a new generation of Neural TTS model called Uni-TTSv4, whose quality shows no significant difference from sentence-level natural speech recordings.

Microsoft debuted the original technology three years ago, with close to human-parity (opens in new tab) quality. This resulted in TTS audio that was more fluid, natural sounding, and better articulated. Since then, Neural TTS has been incorporated into Microsoft flagship products such as Edge Read Aloud (opens in new tab), Immersive Reader (opens in new tab), and Word Read Aloud (opens in new tab). It’s also been adopted by many customers such as AT&T (opens in new tab), Duolingo (opens in new tab), Progressive (opens in new tab), and more. Users can choose from multiple pre-set voices or record and upload their own sample to create custom voices instead. Over 110 languages are supported, including a wide array of language variants, also known as locales.

Microsoft research podcast

Collaborators: Silica in space with Richard Black and Dexter Greene

College freshman Dexter Greene and Microsoft research manager Richard Black discuss how technology that stores data in glass is supporting students as they expand earlier efforts to communicate what it means to be human to extraterrestrials.

The latest version of the model, Uni-TTSv4, is now shipping into production on a first set of eight voices (shown in the table below). We will continue to roll out the new model architecture to the remaining 110-plus languages and Custom Neural Voice (opens in new tab) in the coming milestone. Our users will automatically get significantly better-quality TTS through the Azure TTS API (opens in new tab), Microsoft Office, and Edge browser.

Measuring TTS quality

Text-to-speech quality is measured by the Mean Opinion Score (MOS), a widely recognized scoring method for speech quality evaluation. For MOS studies, participants rate speech characteristics for both recordings of peoples’ voices and TTS voices on a five-point scale. These characteristics include sound quality, pronunciation, speaking rate, and articulation. For any model improvement, we first conduct a side-by-side comparative MOS test (CMOS (opens in new tab)) with production models. Then, we do a blind MOS test on the held-out recording set (recordings not used in training) and the TTS-synthesized audio and measure the difference between the two MOS scores.

During research of the new model, Microsoft submitted the Uni-TTSv4 system to Blizzard Challenge 2021 under its code name, DelightfulTTS. Our paper, “DelightfulTTS: The Microsoft Speech Synthesis System for Blizzard Challenge 2021,” provides in-depth detail of our research and the results. The Blizzard Challenge is a well-known TTS benchmark organized by world-class experts in TTS fields, and it conducts large-scale MOS tests on multiple TTS systems with hundreds of listeners. Results from Blizzard Challenge 2021 demonstrate that the voice built with the new model shows no significant difference from natural speech on the common dataset.

Measurement results for Uni-TTSv4 and comparison

The MOS scores below are based on samples produced by the Uni-TTSv4 model under the constraints of real-time performance requirements.

A Wilcoxon signed-rank test (opens in new tab) was used to determine whether the MOS scores differed significantly between the held-out recordings and TTS. A p-value less than 0.05 (typically ≤ 0.05) is statistically significant and a p-value higher than 0.05 (> 0.05) is not statistically significant. A positive CMOS number shows gain over production, which shows it is more highly preferred by people judging the voice in terms of naturalness.

| Locale (voice) | Human recording (MOS) | Uni-TTSv4 (MOS) | Wilcoxon p-value | CMOS vs PROD |

|---|---|---|---|---|

| En-US (Jenny) | 4.33(±0.04) | 4.29(±0.04) | 0.266 | +0.116 |

| En-US (Sara) | 4.16(±0.05) | 4.12 (±0.05) | 0.41 | +0.129 |

| Zh-CN (Xiaoxiao) | 4.54(±0.05) | 4.51(±0.05) | 0.44 | +0.181 |

| It-IT (Elsa) | 4.59(±0.04) | 4.58(±0.03) | 0.34 | +0.25 |

| Ja-JP (Nanami) | 4.44(±0.04) | 4.37(±0.05) | 0.053 | +0.19 |

| Ko-KR(Sun-hi) | 4.24(±0.06) | 4.15(±0.06) | 0.11 | +0.097 |

| Es-ES (Alvaro) | 4.36(±0.05) | 4.33(±0.04) | 0.312 | +0.18 |

| Es-MX (Dalia) | 4.45 (±0.05) | 4.39(±0.05) | 0.103 | +0.076 |

A comparison of human and Uni-TTSv4 audio samples

Listen to the recording and TTS samples below to hear the quality of the new model. Note that the recording is not part of the training set.

These voices are updated to the new model in the Azure TTS online service. You can also try the demo (opens in new tab) with your own text. More voices will be upgraded to Uni-TTSv4 later.

En-US (Jenny)

The visualizations of the vocal quality continue in a quartet and octet.

Human recording

Uni-TTSv4

En-US (Sara)

Like other visitors, he is a believer.

Human recording

Uni-TTSv4

Zh-CN (Xiaoxiao)

另外,也要规避当前的地缘局势风险,等待合适的时机介入。

Human recording

Uni-TTSv4

It-IT (Elsa)

La riunione del Consiglio di Federazione era prevista per ieri.

Human recording

Uni-TTSv4

Ja-JP (Nanami)

責任はどうなるのでしょうか?

Human recording

Uni-TTSv4

Ko-KR (Sun-hi)

그는 마지막으로 이번 앨범 활동 각오를 밝히며 인터뷰를 마쳤다

Human recording

Uni-TTSv4

Es-ES (Alvaro)

Al parecer, se trata de una operación vinculada con el tráfico de drogas.

Human recording

Uni-TTSv4

Es-MX (Dalia)

Haber desempeñado el papel de Primera Dama no es una tarea sencilla.

Human recording

Uni-TTSv4

How Uni-TTSv4 works to better represent human speech

Over the past 3 years, Microsoft has been improving its engine to make TTS that more closely aligns with human speech. While the typical Neural TTS quality of synthesized speech has been impressive, the perceived quality and naturalness still have space to improve compared to human speech recordings. We found this is particularly the case when people listen to TTS for a while. It is in the very subtle nuances, such as variations in tone or pitch, that people are able to tell whether a speech is generated by AI.

Why is it so hard for a TTS voice to reflect human vocal expression more closely? Human speech is usually rich and dynamic. With different emotions and in different contexts, a word is spoken differently. And in many languages this difference can be very subtle. The expressions of a TTS voice are modeled with various acoustic parameters. Currently it is not very efficient for those parameters to model all the coarse-grained and fine-grained details on the acoustic spectrum of human speech. TTS is also a typical one-to-many mapping problem where there could be multiple varying speech outputs (for example, pitch, duration, speaker, prosody, style, and others) for a given text input. Thus, modeling such variation information is important to improve the expressiveness and naturalness of synthesized speech.

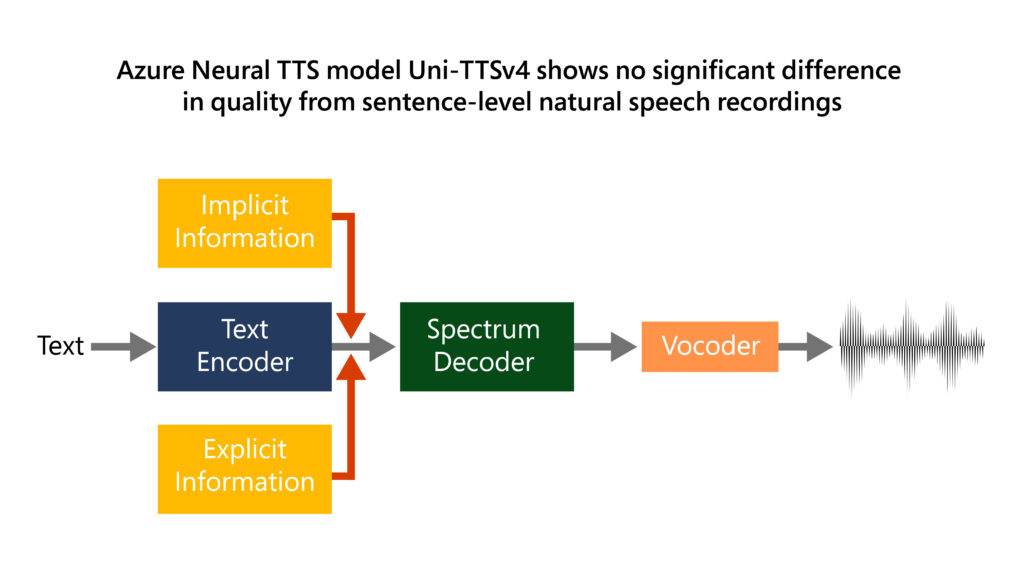

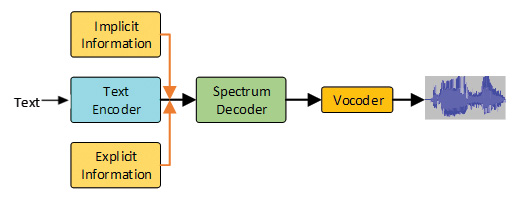

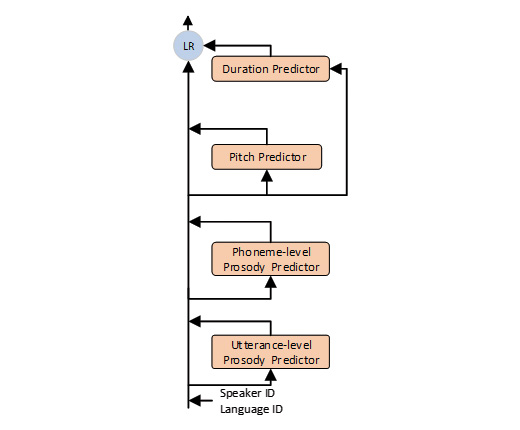

To achieve these improvements in quality and naturalness, Uni-TTSv4 introduces two significant updates in acoustic modeling. In general, transformer models learn the global interaction while convolutions efficiently capture local correlations. First, there’s a new architecture with transformer and convolution blocks, which better model the local and global dependencies in the acoustic model. Second, we model variation information systematically from both explicit perspectives (speaker ID, language ID, pitch, and duration) and implicit perspectives (utterance-level and phoneme-level prosody). These perspectives use supervised and unsupervised learning respectively, which ensures end-to-end audio naturalness and expressiveness. This method achieves a good balance between model performance and controllability, as illustrated below:

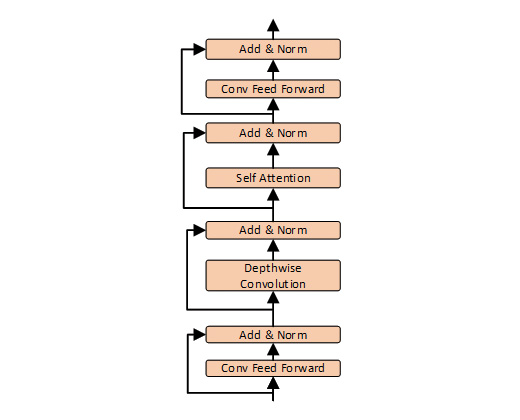

To achieve better voice quality, the basic modelling block needs fundamental improvement. The global and local interactions are especially important for non-autoregressive TTS, considering it has a longer output sequence than machine translation or speech recognition in the decoder, and each frame in the decoder cannot see its history as the autoregressive model does. So, we designed a new modelling block which combines the best of transformer and convolution, where self-attention learns the global interaction while the convolutions efficiently capture the local correlations.

The new variance adaptor, based on FastSpeech2, introduces a hierarchical implicit information modelling pipeline from utterance-level prosody and phoneme-level prosody perspectives, together with the explicit information like duration, pitch, speaker ID, and language ID. Modeling these variations can effectively mitigate the one-to-many mapping problem and improves the expressiveness and fidelity of synthesized speech.

We use our previously proposed HiFiNet (opens in new tab)—a new generation of Neural TTS vocoder—to convert spectrum into audio samples.

For more details of the above system, refer to the paper.

Working to advance AI with XYZ-code in a responsible way

We are excited about the future of Neural TTS with human-centric and natural-sounding quality under the XYZ-Code (opens in new tab) AI framework. Microsoft is committed to the advancement and use of AI grounded in principles that put people first and benefit society. We are putting these Microsoft AI principles (opens in new tab) into practice throughout the company and strongly encourage developers to do the same. For guidance on deploying AI responsibly, visit Responsible use of AI with Cognitive Services (opens in new tab).

Get started with Neural TTS in Azure

Neural TTS in Azure offers over 270 neural voices across over 110 languages (opens in new tab) and locales. In addition, the capability enables organizations to create a unique brand voice in multiple languages and styles. To explore the capabilities of Neural TTS with some of its different voice offerings, try the demo (opens in new tab).

For more information:

- Read our documentation (opens in new tab).

- Check out our sample code (opens in new tab).

- Check out the code of conduct (opens in new tab) for integrating Neural TTS into your apps.

Acknowledgments

The research behind Uni-TTSv4 was conducted by a team of researchers from across Microsoft, including Yanqing Liu, Zhihang Xu, Xu Tan, Bohan Li, Xiaoqiang Wang, Songze Wu, Jie Ding, Peter Pan, Cheng Wen, Gang Wang, Runnan Li, Jin Wu, Jinzhu Li, Xi Wang, Yan Deng, Jingzhou Yang, Lei He, Sheng Zhao, Tao Qin, Tie-Yan Liu, Frank Soong, Li Jiang, Xuedong Huang with the support from all the Azure Speech and Cognitive Services team members, Integrated Training Platform, and ONNX Runtime teams (opens in new tab) for making this great accomplishment possible.