Editor’s note: This post and its research are the collaborative efforts of our team, which includes Andrew Ilyas (PhD Student, MIT), Logan Engstrom (PhD Student, MIT), Aleksander Mądry (Professor at MIT), Ashish Kapoor (Partner Research Manager).

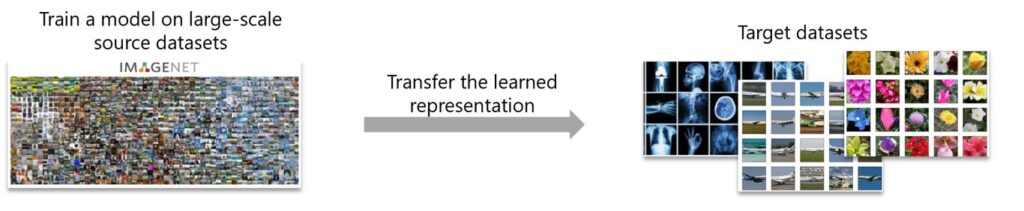

In practical machine learning, it is desirable to be able to transfer learned knowledge from some “source” task to downstream “target” tasks. This is known as transfer learning—a simple and efficient way to obtain performant machine learning models, especially when there is little training data or compute available for solving the target task. Transfer learning is very useful in practice. For example, transfer learning allows perception models on a robot (opens in new tab) or other autonomous system (opens in new tab) to be trained on a synthetic dataset generated via a high-fidelity simulator, such as AirSim (opens in new tab), and then refined on a small dataset collected in the real world.

Transfer learning is also common in many computer vision tasks, including image classification and object detection, in which a model uses some pretrained representation as an “initialization” to learn a more useful representation for the specific task in hand. In a recent collaboration with MIT, we explore adversarial robustness as a prior for improving transfer learning in computer vision. We find that adversarially robust models outperform their standard counterparts on a variety of downstream computer vision tasks.

Read Paper (opens in new tab) Code & Models (opens in new tab)

In our work we focus on computer vision and consider a standard transfer learning pipeline: “ImageNet pretraining.” This pipeline trains a deep neural network on ImageNet (opens in new tab), then tweaks this pretrained model for another target task, ranging from image classification of smaller datasets to more complex tasks like object detection and image segmentation.

Refining the ImageNet pretrained model can be done in several ways. In our work we focus on two common methods:

- Fixed-feature transfer: we replace the last layer of the neural network with a new layer that fits the target task. Then we train the last layer on the target dataset while keeping the rest of the layers fixed.

- Full-network transfer: we do the same as in fixed-feature, but instead of fine-tuning the last layer only, we fine-tune the full model.

The full-network transfer setting typically outperforms the fixed-feature strategy in practice.

Spotlight: On-demand video

How can we improve transfer learning?

The performance of the pretrained model on the source tasks plays a major role in determining how well it transfers to the source tasks. In fact, a recent study by Kornblith, Shlens, and Le (opens in new tab) finds that a higher accuracy of pretrained ImageNet models leads to better performance on a wide range of downstream classification tasks. The question that we would like to answer here is whether improving the ImageNet accuracy of the pretrained model is the only way to improve its transfer learning.

After all, our goal is to learn broadly applicable features on the source dataset that can transfer to target datasets. ImageNet accuracy likely correlates with the quality of features that a model learns, but it may not fully capture the downstream utility of those features. Ultimately, the quality of learned features stems from the priors we impose on them during training. For example, there have been several studies of the priors imposed by architectures (such as convolutional layers (opens in new tab)), loss functions (opens in new tab), and data (opens in new tab) augmentation (opens in new tab) on network training.

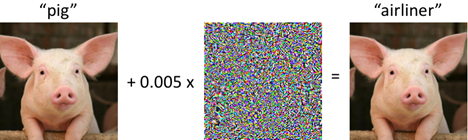

In our paper “Do Adversarially Robust ImageNet Models Transfer Better?” (opens in new tab) we study another prior: adversarial robustness, which refers to a model’s invariance to small imperceptible perturbations of its inputs, namely adversarial examples (opens in new tab). It is well known by now that standard neural networks are extremely vulnerable to such adversarial examples. For instance, Figure 2 shows that a tiny perturbation (or change) of the pig image, a pretrained ImageNet classifier will mistakenly predict it as an “airliner” with very high confidence:

Adversarial robustness is therefore typically enforced by replacing the standard loss objective with a robust optimization (opens in new tab) objective:

\[\min_{\theta} \mathbb{E}_{(x,y)\sim D}\left[\mathcal{L}(x,y;\theta)\right] \rightarrow \min_{\theta} \mathbb{E}_{(x,y)\sim D} \left[\max_{\|\delta\|_2 \leq \varepsilon} \mathcal{L}(x+\delta,y;\theta) \right].\]This objective trains models to be robust to worse-case image perturbations within an \(\ell_2\) ball around the input. The hyperparameter \(\varepsilon\) governs the intended degree of invariance to the corresponding perturbations. Note that setting \(\varepsilon=0\) corresponds to standard training, while increasing ε induces robustness to increasingly large perturbations.

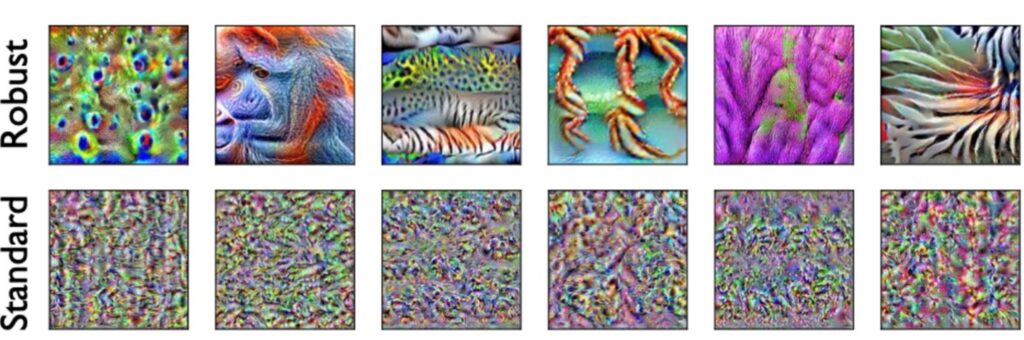

Adversarial robustness has been initially studied solely through the lens of machine learning security, but recently a line of (opens in new tab) work (opens in new tab) studied the effect of imposing adversarial robustness as a prior on learned feature representations. These works have found that although these adversarially robust models tend to attain lower accuracies than their standardly trained counterparts, their learned feature representations carry several advantages over those of standard models. These advantages include better-behaved (opens in new tab) gradients (opens in new tab) (see Figure 3), representation invertibility (opens in new tab), and more specialized features (opens in new tab). These desirable properties might suggest that robust neural networks are learning better feature representations than standard networks, which could improve the transferability of those features.

Adversarial robustness and transfer learning

To sum up, we have two options of pretrained models to use for transfer learning. We can either use standard models that have high accuracy but little robustness on the source task; or we can use adversarially robust models, which are worse in terms of ImageNet accuracy but are robust and have the “nice” representational properties (see Figure 3). Which models are better for transfer learning?

To answer this question, we trained a large number of standard and robust ImageNet models. (All models are available for download via our code/model release (opens in new tab), and more details on our training procedure can be found there and in our paper (opens in new tab).) We then transferred each model (using both the fixed-feature and full-network settings) to 12 downstream classification tasks and evaluated the performance.

We find that adversarially robust source models almost always outperform their standard counterparts in terms of accuracy on the target task. This is reflected in the table below, in which we compare the accuracies of the best standard model and the best robust model (searching over the same set of hyperparameters and architectures):

| Dataset | |||||||||||||

| Mode | Model | Aircraft | Birdsnap | CIFAR-10 | CIFAR-100 | Caltech-101 | Caltech-256 | Cars | DTD | Flowers | Food | Pets | SUN397 |

| Fixed-feature | Robust Standard | 44.14 38.69 | 50.72 48.35 | 95.53 81.31 | 81.08 60.14 | 92.76 90.12 | 85.08 82.78 | 50.67 44.63 | 70.37 70.09 | 91.84 91.90 | 69.26 65.79 | 92.05 91.83 | 58.75 55.92 |

| Full-network | Robust Standard | 86.24 86.57 | 76.55 75.71 | 98.68 97.63 | 89.04 85.99 | 95.62 94.75 | 87.62 86.55 | 91.48 91.52 | 76.93 75.80 | 97.21 97.04 | 89.12 88.64 | 94.53 94.20 | 64.89 63.72 |

The following graph shows, for each architecture and downstream classification task, the performance of the best standard model compared to that of the best robust model. As we can see, adversarially robust models improve on the performance of their standard counterparts per architecture too, and the gap tends to increase as the network’s width increases:

We also evaluate transfer learning on other downstream tasks including object detection and instance segmentation, both for which using robustness backbone models outperforms using standard models as shown in the table below:

| Task | Box AP | Mask AP | ||

| Standard Robust | Standard Robust | |||

| VOC Object Detection | 52.80 53.87 | — — | ||

| COCO Object Detection | 39.61 40.13 | — — | ||

| COCO Instance Segmentation | 40.74 41.04 | 36.98 37.23 |

Empirical mysteries and future work

Overall, we have seen that adversarially robust models, although being less accurate on the source task than standard-trained models, can improve transfer learning on a wide range of downstream tasks. In our paper (opens in new tab), we study this phenomenon in more detail. There, we analyze the effects of model width and robustness levels on the transfer performance, and we compare adversarial robustness to other notions of robustness. We also uncover a few somewhat mysterious properties: for example, resizing images seems to have a non-trivial effect on the relationship between robustness and downstream accuracy.

Finally, our work provides evidence that adversarially robust perception models transfer better, yet understanding precisely what causes this remains an open question. More broadly, the results we observe indicate that we still do not yet fully understand (even empirically) the ingredients that make transfer learning successful. We hope that our work paves the way for more research initiatives to explore and understand what makes transfer learning work well.